Grid computing applies several computers to a single problem simultaneously. It is commonly used for scientific or technical challenges that require a great number of processing cycles or simultaneous access to large amounts of data, and a primary application is using software to divide a program into pieces and apportion them among several computers.

Automation architectures have evolved by emulating IT infrastructure and by adapting the intellectual property of IT implementations to develop systems that meet the needs of automation users. Applying automation developments to conveyance in industrial manufacturing facilities, warehouses and distribution centers suggests a convergence of automation architecture evolution with grid computing concepts.

Distributed logic, the most recent architectural offspring of IT, is already moving the automation community away from a centralized logic architecture and toward putting just enough computing power at the mechanical prime mover, in devices such as the motor starters and drives that operate conveyors. This, in turn, makes processes faster because decisions are made locally by distributed processing solutions.

A viable concept is a system in which a PLC still makes decisions for the entire system but delegates some of the logic to local components, which would shorten response times and free the PLC to tackle other tasks. The best news is that decentralized, distributed computing, with its benefits, is within reach. Although the premise is sound, completing the equation requires:

- A common software platform for hardware compatibility, regardless of the manufacturer
- Implementation of common hardware that supports the distributed software
- A common configuration and engineering tool such as Field Device Tool/Device Type Manager (FDT/DTM)
- An open, highly available network

Beyond component response time and productivity, perhaps the key benefit of decentralized, distributed computing would be the ability to implement a network infrastructure without worrying about network speed and throughput. With distributed intelligence, having a high-speed network to resolve logic isn’t as necessary as having a high-availability network — transforming today’s concept of a control network into more of a straight reporting mechanism. Other benefits will also be discovered as OEMs and their end-user customers embrace the concept and set the example.

Distributed computing

Today, most machine processes rely on a PLC to read the inputs, resolve the logic and set the outputs. For example, consider a conveyor in a deterministic material handling process that uses a motor starter linked to an optical sensor. When a box triggers the sensor, the signal is sent to a PLC, which reads it, starts the motor associated with that portion of the process, and then stops the motor when the box has passed. Although much is happening, inputs are handled one at a time, so the PLC cycle time (and, if applicable, network travel time) adds directly to response time.
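The read, resolve and set sequence described above can be sketched in a few lines. This is an illustration only: the names `CentralTiming`, `plc_scan` and `worst_case_response_ms` are hypothetical, the motor rule is a stand-in for real control logic, and the timing formula is a common simplifying assumption (a sensor change that just misses one scan is picked up by the next), not a figure from any particular PLC.

```python
from dataclasses import dataclass


@dataclass
class CentralTiming:
    scan_ms: float     # one full PLC scan: read inputs, resolve logic, set outputs
    network_ms: float  # one-way travel time between a field device and the PLC


def resolve_logic(sensor_blocked):
    # Illustrative rule: each zone's motor runs while its sensor sees a box.
    return {zone: blocked for zone, blocked in sensor_blocked.items()}


def plc_scan(read_inputs, write_outputs):
    inputs = read_inputs()           # 1. read all inputs as one snapshot
    outputs = resolve_logic(inputs)  # 2. resolve the logic
    write_outputs(outputs)           # 3. set the outputs
    return outputs


def worst_case_response_ms(t):
    # Assumed model: a change that just misses the input read waits almost
    # one full scan, then needs a second scan to be processed; the signal
    # also crosses the network once in each direction.
    return 2 * t.scan_ms + 2 * t.network_ms
```

Under these assumptions, a 10 ms scan and a 2 ms one-way network delay give a worst case of 24 ms between the box reaching the sensor and the motor command arriving, which is the delay that local decision making aims to cut.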

Response time translates to productivity; the faster a conveyor can run and collect information, the more productive a facility will be. Obviously, much of that has to do with mechanical design, but with so many steps that must occur, empowering components to make control decisions locally and report status over an open, highly available communication network would shorten response times and consequently increase productivity.

Such a decentralized format would also increase flexibility for facility managers. For example, if control is distributed to individual VFDs and motor starters in a three-conveyor configuration, and one of the lines goes down for some reason, the other two lines can continue working unencumbered, and could even theoretically take on the productivity of the affected line.
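As a rough illustration of the three-conveyor case, the sketch below moves the load of a stopped line onto the lines that are still running, limited by their spare capacity. The function name `redistribute`, the units (boxes per minute) and the greedy allocation order are all assumptions made for this example; a real system would apply its own rules.

```python
def redistribute(rates, capacity, down):
    """Shift the load of a stopped line onto the remaining lines.

    rates and capacity map a line name to boxes per minute.
    Returns the new rates and any load that could not be placed.
    """
    new_rates = {line: rate for line, rate in rates.items() if line != down}
    remaining = rates[down]
    for line in new_rates:
        # Each running line absorbs only what its spare capacity allows.
        spare = max(0.0, capacity[line] - new_rates[line])
        take = min(spare, remaining)
        new_rates[line] += take
        remaining -= take
    new_rates[down] = 0.0
    return new_rates, remaining
```

With three lines each running 30 boxes per minute against a capacity of 40, stopping one line lets the other two rise to 40 each, leaving 10 boxes per minute that cannot be absorbed.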

Software, network shifts

While engineering VFDs, motor starters and other I/O devices with logic-resolving capabilities would be one key success factor, others would be the development of an agreed-upon industry software standard and implementation of a high-availability network.

As with any component used in a manufacturing facility, warehouse or distribution center, OEMs have personal preferences for suppliers and components they choose — customer standards notwithstanding. A decentralized, distributed computing format would benefit from a holistic approach to software, where suppliers work together to develop a common standard so compatibility among products from multiple suppliers is guaranteed.

One organization that could head up a standard for a common platform is the CoDeSys Automation Alliance, an organization that advocates the use of a specific open software platform. The CoDeSys Automation Alliance unites companies of the automation industry, whose hardware devices are programmed with the IEC 61131-3 programming system, known as CoDeSys. Cooperation among hardware and software companies in the automation industry is the underlying concept of CoDeSys.

CoDeSys offers the ability to use a single system for programming and data exchange among all compatible components in the network. The software would no longer be adapted to the hardware by customization. Instead, it would be parameter-driven by using configuration files. Parameters such as memory model, memory layout and CPU settings could be changed without even touching the sources. Concentrating on one standard, common software version would ensure quality management and reduce the need for support.
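The parameter-driven idea can be illustrated generically. The sketch below is not the CoDeSys configuration format; the parameter names, default values and the `load_target_settings` helper are hypothetical and only show how one unchanged code base could be adapted to different hardware through a configuration file.

```python
import json

# Hypothetical defaults shared by every target; none of these names
# come from CoDeSys itself.
DEFAULTS = {
    "memory_model": "flat",
    "retain_bytes": 1024,
    "cpu": "generic",
    "cycle_ms": 10,
}


def load_target_settings(config_text):
    """Merge a device's configuration file over the shared defaults."""
    overrides = json.loads(config_text)
    unknown = set(overrides) - set(DEFAULTS)
    if unknown:
        # Reject misspelled or unsupported parameters instead of ignoring them.
        raise ValueError(f"unknown parameters: {sorted(unknown)}")
    return {**DEFAULTS, **overrides}
```

A drive from one supplier might ship a file such as `{"cpu": "arm", "cycle_ms": 5}`, while another supplier's motor starter ships a different file; the code that reads them stays the same.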

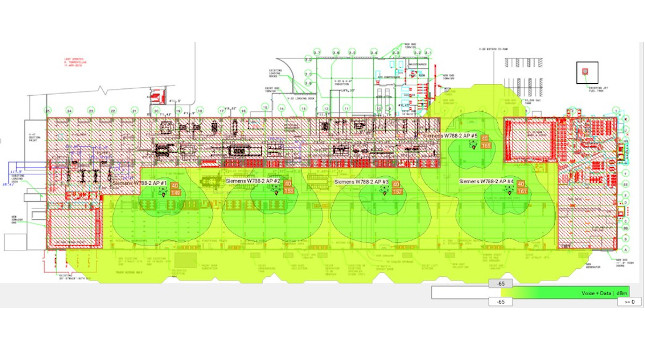

Likewise, networking and communications would also have to undergo a shift, but the technology is already available. Today’s networking mode of operation requires a high-speed network to process small — but constant — packets of control information in a deterministic fashion. When logic is shifted from a centralized PLC to individual I/O nodes, the network won’t require the same speed because it’s no longer carrying that control information. However, the network must be highly available because those individual nodes need to constantly report back over the network to a SCADA system, particularly for alarms, alerts and productivity information, in addition to communicating with each other to keep processes moving. A logical network option for this approach is open Ethernet (IEEE standard 802.3).
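The shift from carrying control traffic to carrying reports can be sketched as follows. The `ConveyorNode` class, its message fields and the `outbox` list (which stands in for the Ethernet link to a SCADA system) are assumptions for illustration; no real network or SCADA interface is used.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ConveyorNode:
    node_id: str
    motor_running: bool = False
    # Stand-in for the network link; a real node would send these messages.
    outbox: list = field(default_factory=list)

    def on_sensor(self, box_present):
        # The control decision is resolved locally, with no round trip
        # to a central PLC.
        changed = box_present != self.motor_running
        self.motor_running = box_present
        if changed:
            self.report("motor_started" if box_present else "motor_stopped")

    def report(self, event):
        # Only status information travels over the network.
        self.outbox.append(json.dumps({"node": self.node_id, "event": event}))
```

In this sketch the motor responds as soon as the sensor changes, and the network carries only the resulting status messages, so a late report delays the display at the SCADA system rather than the conveyor itself.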

The current centralized format is deterministic, and, as such, networks such as PROFIBUS and CANopen are typically used because of their high speed and high throughput capabilities. But in the future, Ethernet is best suited for a decentralized, distributed computing format for several reasons, not the least of which are integration with the rest of a facility’s IT system and the ability to use spare parts universally (i.e., in both the office and industrial environments). Using a facility’s IT staff to service Ethernet throughout the plant would also make more efficient use of personnel.

Ahead of the curve

One of the main ways to increase productivity, particularly in conveyance, is to shorten I/O device response times. While the current centralized format works adequately, a decentralized, distributed computing format would exceed current capabilities, and it is already being implemented to varying degrees.

Facility managers and OEMs can continue to turn the concepts of decentralized, distributed computing into reality by working with trusted automation and control suppliers that specialize in material handling, logistics and conveyance components. Those firms can provide further insight into where the state of the art currently stands and where it is headed. Early adopters stand to reap the benefits sooner.

Conveyors abound in industrial manufacturing facilities, warehouses and distribution centers. As automation architectures evolve, advanced concepts such as grid computing and standardization of software and network platforms could improve conveyor system efficiency.

One way to increase the productivity of conveyors within a facility is to shorten the response time of the PLC-controlled conveyor components, which depends more on network availability than on network speed.

Author Information: David Voynow has worked for the Schneider Electric North American Operating Division for two years as material handling equipment market segment manager.