As manufacturers continuously seek ways to improve industrial network uptime on the plant floor, new monitoring devices and diagnostic tools are becoming available to meet this goal. Preventative network diagnostics is on the rise, and this trend can help companies cut overall production costs, minimize downtime and increase efficiency.

When a network fault occurs on the plant floor, a production failure often ensues. Enlightened organizations have been adopting cutting-edge preventative diagnostics on the plant floor for years, so it’s easy to understand why many of them would want to extend this program to industrial networks.

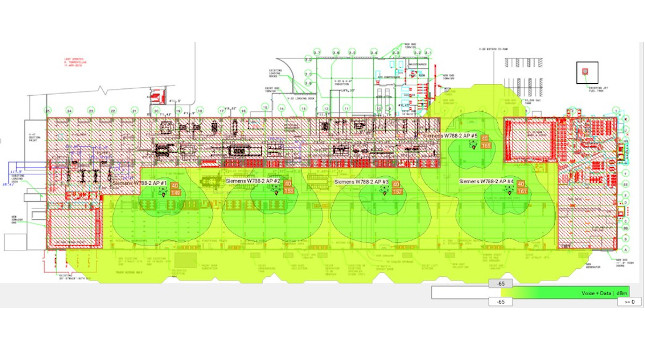

Preventative diagnostics give you an early snapshot of a network that is likely to cause problems. Using a secondary high-speed plant floor network (typically Ethernet) to monitor data from the primary network allows manufacturers to collect and analyze network data throughout a plant. This approach can significantly reduce, and even prevent, unplanned network downtime, because out-of-tolerance networks can be corrected before they shut down in a fault condition.

Plant floor significance

Why is network monitoring especially important on the plant floor? In contrast to the office environment, where a brief loss of communication is somewhat tolerable, communication is the lifeline of a plant. In certain industries, industrial communication systems also provide data that is critical for meeting regulatory requirements. If your network is not communicating, your organization feels the financial effects of lost production.

With some obvious overlap, network diagnostic tools fall into three broad categories:

- Network configuration and diagnostic tools
- Network protocol analyzers
- Network physical layer signal analyzers

The first two sets of tools are useful in diagnosing issues once the network has gone into a fault state, and they may provide limited warning of impending issues. The last one is more useful in understanding physical layer issues that can ultimately result in network faults. For example, the following digital storage oscilloscope captures illustrate how a CAN (controller area network) signal can become distorted due to a typical media breakdown issue on a DeviceNet network.

To detect physical layer issues, it is desirable to compare current network parameter readings with those of a network known to be "good." This is best done by taking a "baseline" reading when the network is commissioned (in pristine condition), or by comparing readings with published recommendations for the network in question (e.g., ODVA's recommended tolerances for DeviceNet). Continuously comparing readings with the baseline or standard allows a proactive response to out-of-tolerance network parameters, often before complete network failure.
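The baseline comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the parameter names, baseline values and allowed deviations are hypothetical placeholders, not ODVA's published tolerances, and a real monitoring device would supply the readings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    baseline: float       # reading captured at commissioning (hypothetical value)
    max_deviation: float  # allowed absolute drift from the baseline (hypothetical value)


def out_of_tolerance(readings, tolerances):
    """Return {parameter: deviation} for every reading that drifts past its tolerance."""
    flagged = {}
    for name, value in readings.items():
        tol = tolerances.get(name)
        if tol is None:
            continue  # no baseline recorded for this parameter
        deviation = value - tol.baseline
        if abs(deviation) > tol.max_deviation:
            flagged[name] = deviation
    return flagged


# Illustrative baseline only; these names and numbers are assumptions.
BASELINE = {
    "bus_voltage_v": Tolerance(baseline=24.0, max_deviation=2.0),
    "differential_voltage_v": Tolerance(baseline=2.0, max_deviation=0.5),
    "error_frames_per_s": Tolerance(baseline=0.0, max_deviation=5.0),
}

# One hypothetical sample taken from the monitoring network.
sample = {
    "bus_voltage_v": 23.5,
    "differential_voltage_v": 1.25,
    "error_frames_per_s": 12.0,
}

flagged = out_of_tolerance(sample, BASELINE)
for name, deviation in flagged.items():
    print(f"{name}: {deviation:+.2f} from baseline")
```

Run continuously against each new sample, a check like this flags a drifting parameter while the network is still communicating, which is the point at which maintenance can be scheduled rather than forced.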

The use of Ethernet to continuously monitor the data on the primary network means that this information can be provided to common PLC/PAC or PC monitoring systems. Software tools are available for use on PCs to enable easy data display and provide suggested causes for out-of-tolerance parameters. The data can also be stored to historian software for future analysis.

With state-of-the-art network diagnostic tools, you can connect to and monitor what is happening to your network on a consistent basis, to help ensure top efficiency on the plant floor.

Good CAN Waveform

Bad CAN Waveform (entire frame)

Bad CAN Waveform (one data bit)

A second high-speed network can collect and analyze data from the plant floor.

Author Information: Michael Frayne is a Product Manager for network interfaces at Molex Inc., based in Waterloo, Ontario.

Plugging the plant security gap

There has been a battle going on in the process automation market since the U.S. government started its Homeland Security program following 9/11. As a consequence, all operators of critical or potentially dangerous processes and assets, such as waterworks, substations, oil and gas facilities and chemical plants, have had to prove that they are protected against external attacks, especially those carried out over the Internet.

During these investigations, the government learned something that those of us in the industry have known for years: the software and IP stacks implemented in today's process control systems are extremely vulnerable. Sometimes just a brief network load of more than 10% or 20% can disrupt or even stop the whole process. At the moment, operators and organizations like NAMUR and NERC are pressuring controller vendors to deliver secure control systems.

The goal these organizations have been working toward is a commonly accepted test method for industrial devices and a classification similar to the safety integrity levels (SIL) used in functional safety. These Security Assurance Levels (SAL) are now being defined in part 4 of the ISA 99 standard.

Since Feb. 5, 2009, we have had a new standard in the process industry, backed by some big players and endorsed by the U.S. government and the Department of Homeland Security in particular. Implementation is causing some companies to scurry a little, as there are some difficult pieces in the standard, especially for legacy systems. However, there is little doubt that this is how security will be handled going forward and that the big controls companies will make their process automation systems compliant with this security standard.

The big question is whether SAL certification will cross over to plant floor people who are worried about security, and eventually even dovetail with the SIL certifications they already have to deal with in implementing safety networks. The only reason it would not is that commercial IT standards may prevail instead. If discrete and process diverge in how they handle security because of this, it will become a pain in the butt for users and vendors who have to make sense of two very different approaches.

This will be another front in the war between IT and Automation people in the coming years. It’s too early to see who will win but it sure will be an interesting fight. It is clear to me the next few years are crucial to defining security on the plant floor.

Ed Nabrotzky is the global product manager for Molex.