Today's plant engineers are sometimes required to develop data acquisition systems that monitor, measure, or maintain processes or tests within their plants. Data acquisition systems provide the necessary information to determine optimized production conditions, preventive/predictive maintenance, and emergency repair or replacement of equipment.

Key Concepts

Data acquisition systems acquire, analyze, and present information.

Sampling rate is inversely proportional to resolution.

PXI is much better suited for harsh operating conditions than a standard desktop PC.

The challenge in developing data acquisition systems is determining what type of system to use for a given set of measurements. This article defines data acquisition, explains its uses, and identifies architectures and platforms for industrial applications.

Maintaining industrial equipment

Industrial equipment must continually operate at peak performance with minimum maintenance downtime to ensure maximum productivity. Although industrial equipment can require a large capital investment, routine equipment maintenance or repair can also represent a significant cost, especially when the repair is premature or unexpected. A data acquisition system can be used to test, measure, and monitor industrial equipment to minimize unnecessary repair and significantly prolong equipment lifespan.

Frequently, tests and measurements are required to ensure optimal operation and minimal downtime of industrial equipment. These measurements include pressure, temperature, flow rate, power monitoring, vibration, and load. Plant engineers can use vibration measurements from accelerometers to detect imbalance and other anomalies in shaft rotation of gas turbines or other rotating equipment, which could indicate insufficient lubrication, damaged bearings, or misaligned gears. They can use electric motor voltage and current measurements to monitor power consumption relative to applied load. They can use temperature data from thermocouples or RTDs to detect heat variations in the cylinders of compressors and pumps, which can indicate excessive friction. Industrial manufacturing plants have many physical and electrical parameters associated with many processes and systems, most of which must be quantified at some time.

The challenge for plant engineers is to determine what data acquisition architecture is best suited for the array of measurements required to successfully monitor the health of industrial equipment. Typical data acquisition consists of these three steps:

Acquire the data (measure the signal)

Analyze the data (process the data)

Present the data (log the data)
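The three steps above can be sketched as a small pipeline. This is only an illustration: the function names are hypothetical, and the acquire step synthesizes a 100-Hz test signal in place of real hardware.

```python
import math

def acquire(num_samples, sample_rate_hz):
    """Stand-in for hardware: synthesize a 100-Hz vibration signal."""
    return [math.sin(2 * math.pi * 100.0 * n / sample_rate_hz)
            for n in range(num_samples)]

def analyze(samples):
    """Reduce the raw samples to a few summary values."""
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return {"min": min(samples), "max": max(samples), "rms": rms}

def present(summary):
    """Format the summary as a single log line."""
    return ", ".join(f"{name}={value:.3f}" for name, value in summary.items())

samples = acquire(1000, 1000.0)   # acquire the data
summary = analyze(samples)        # analyze the data
log_line = present(summary)       # present the data
print(log_line)
```

In a real system each function would be replaced by the driver call, analysis routine, and logging or display method chosen in the sections that follow.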

Acquire the data

To determine which type of acquisition hardware is best suited for a given measurement system, evaluate the following points:

What is the sampling rate required by the signals you are measuring? Data acquisition devices have varying sampling rate capabilities. Sampling rates can range from as low as 1 Hz (one sample per second) to 1 MHz (one million samples per second), depending on the measured signal. A good rule of thumb for determining the minimum sampling rate for a given signal is the Nyquist Theorem, which states that a signal must be sampled at more than twice its highest frequency component to be represented without aliasing. For a more precise measurement, it is recommended that a signal be sampled at 5 to 10 times its frequency. For example, a turbine shaft that rotates at 6000 rpm has a rotational speed equivalent to 100 rotations per second (rps), or 100 Hz. To adequately characterize the rotation of this shaft, the Nyquist Theorem calls for a sampling rate above 200 Hz. Data acquisition experience suggests that 500 to 1000 Hz is an optimal sampling rate for accurate representation or characterization of this acquired signal.
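The turbine shaft arithmetic can be reproduced in a few lines. The function names below are illustrative, and the 5x and 10x factors are simply the rule of thumb quoted in the text.

```python
def shaft_frequency_hz(rpm):
    """Convert rotational speed in rpm to rotations per second (Hz)."""
    return rpm / 60.0

def sampling_rates(signal_hz, low_factor=5, high_factor=10):
    """Return the Nyquist limit and the rule-of-thumb sampling range."""
    return {
        "nyquist_limit_hz": 2 * signal_hz,        # sample faster than this
        "recommended_low_hz": low_factor * signal_hz,
        "recommended_high_hz": high_factor * signal_hz,
    }

freq = shaft_frequency_hz(6000)   # 100.0 Hz
rates = sampling_rates(freq)      # 200, 500, and 1000 Hz
print(freq, rates)
```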

How precise must your data acquisition device be for the signals you are measuring? For commercial off-the-shelf analog-to-digital (A/D) technology, sampling rate capability tends to be inversely related to resolution (Fig. 1). The resolution of most data acquisition hardware is between 12 bits and 24 bits, although very high-speed devices may offer as few as 8 bits. For example, a high-precision 24-bit device, which may be necessary to adequately characterize a slow-changing temperature, may have a maximum sampling rate of only 60 Hz. Conversely, an 8-bit device may have a sampling rate as high as 1 gigasample per second (GS/s).
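To see what a given bit count means in practice, the sketch below computes the smallest voltage step an ideal converter can resolve. The 20-V span (a -10 V to +10 V input range) is an assumption chosen for illustration, not a figure from any particular device.

```python
def lsb_size(full_scale_span, bits):
    """Smallest step an ideal A/D converter can resolve over the given span."""
    return full_scale_span / (2 ** bits)

SPAN_VOLTS = 20.0  # assumed -10 V to +10 V input range

for bits in (8, 12, 16, 24):
    step_microvolts = lsb_size(SPAN_VOLTS, bits) * 1e6
    print(f"{bits}-bit: {step_microvolts:.2f} microvolts per step")
```

Under this assumption an 8-bit device resolves steps of roughly 78 mV, while a 24-bit device resolves steps of roughly 1.2 microvolts. Real devices add noise and other errors, so usable accuracy is lower than the ideal step size suggests.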

What types of sensors or transducers are needed for your signals? Sensors and transducers are essential to any data acquisition system. They convert physical phenomena such as temperature, pressure, or vibration to an electrical signal, usually voltage, that can be measured by a data acquisition device. Some examples of sensors and transducers include thermocouples, accelerometers, strain gauges, and flowmeters.

Do your signals require conditioning? Most sensors or transducers produce signals that have very small amplitudes, include unwanted environmental noise, and contain common-mode voltage errors caused by differences in ground potentials. Signal conditioning compensates for these problems using signal amplification, filtering, and electrical isolation. For example, most data acquisition applications are subject to 60-Hz noise caused by power lines or electrical machinery. Adding a signal-conditioning device with the appropriate filter rejects the unwanted 60-Hz noise and improves the quality of the desired signal.
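Hardware signal conditioning does this filtering before the signal is digitized, but the effect of 60-Hz rejection can be illustrated in software. The following is a minimal sketch of a second-order digital notch filter using the widely published biquad notch formulas. The sample rate, Q value, and test signal are assumptions for demonstration.

```python
import math

def notch_coefficients(notch_hz, sample_rate_hz, q=5.0):
    """Second-order (biquad) notch centered on notch_hz, normalized so a[0] = 1."""
    w0 = 2 * math.pi * notch_hz / sample_rate_hz
    alpha = math.sin(w0) / (2 * q)
    a0 = 1 + alpha
    b = [1 / a0, -2 * math.cos(w0) / a0, 1 / a0]
    a = [1.0, -2 * math.cos(w0) / a0, (1 - alpha) / a0]
    return b, a

def apply_biquad(samples, b, a):
    """Run the samples through the filter (direct form I, zero initial state)."""
    out = []
    x1 = x2 = y1 = y2 = 0.0
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        out.append(y)
        x2, x1 = x1, x
        y2, y1 = y1, y
    return out

# Assumed test signal: a steady 2.0-V level with 1.0-V peak 60-Hz hum on top.
sample_rate_hz = 1000.0
noisy = [2.0 + math.sin(2 * math.pi * 60.0 * n / sample_rate_hz)
         for n in range(1000)]
b, a = notch_coefficients(60.0, sample_rate_hz)
cleaned = apply_biquad(noisy, b, a)
print(min(cleaned[-200:]), max(cleaned[-200:]))
```

After the filter's start-up transient dies out, the output settles at the 2.0-V level with the hum removed. A lower Q widens the notch and shortens the transient; a higher Q does the opposite.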

In what kind of environment will your data acquisition system be operating? Operating location, packaging size (footprint requirements), ruggedness, and environmental harshness dictate the type of data acquisition hardware used. Data acquisition devices are available in a variety of sizes, have varying ruggedness characteristics, and are usually rated according to operating temperatures, vibration, and functional shock. For example, highly rugged devices such as programmable automation controllers (PACs) have physical dimensions similar to those of typical PLCs, can function in environments up to 158 F, and are compliant with Class 1, Division 2 specifications for harsh environment operation.

Analyze the data

Depending on the type of data acquired, various post-acquisition algorithms can be applied to fully characterize a signal. For example, Fast Fourier Transform (FFT) is an analysis method that calculates the frequency components of a time-domain signal (Fig. 2). FFT calculations are used for applications such as observing vibration frequencies in rotational machinery or characterizing the cleanliness of the ac power output from a generator.
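As an illustration of frequency analysis, the sketch below computes a direct discrete Fourier transform (DFT) in plain Python. An FFT produces the same spectrum far more efficiently; the direct form is used here only to keep the example self-contained. The two-tone test signal and its amplitudes are assumptions.

```python
import cmath
import math

def dft_magnitudes(samples):
    """Amplitude of each frequency bin from 0 Hz up to half the sample rate.

    The 2/N scaling gives the peak amplitude of a sine component; it is not
    correct for the 0-Hz bin or the final (Nyquist) bin.
    """
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(samples[i] * cmath.exp(-2j * math.pi * k * i / n)
                  for i in range(n))
        mags.append(abs(acc) * 2 / n)
    return mags

def dominant_frequency_hz(samples, sample_rate_hz):
    """Frequency of the largest non-DC component."""
    mags = dft_magnitudes(samples)
    k = max(range(1, len(mags)), key=lambda i: mags[i])
    return k * sample_rate_hz / len(samples)

# Assumed test signal: 100-Hz shaft vibration plus a weaker 250-Hz component.
sample_rate_hz = 1000.0
signal = [math.sin(2 * math.pi * 100 * n / sample_rate_hz)
          + 0.3 * math.sin(2 * math.pi * 250 * n / sample_rate_hz)
          for n in range(200)]
peak_hz = dominant_frequency_hz(signal, sample_rate_hz)
print(peak_hz)
```

With 200 samples at 1000 Hz, each bin is 5 Hz wide, so both components fall exactly on a bin and the 100-Hz component is reported as dominant.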

For vibration measurements, additional software analysis tools include orbit plots, polar plots, and Campbell plots. For temperature or pressure measurements, statistical algorithms such as time-average, maximum value, and minimum value can be performed. Software filtering may be necessary to remove unwanted signals from the data. Many software applications, including graphical programming languages, incorporate specific tools for runtime or post-acquisition analysis for quick, efficient, and powerful signal analysis.
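For slow-changing signals such as temperature, the statistics mentioned above are simple to compute. The sketch below also shows a moving average as one basic form of software filtering. The readings are made-up values for illustration.

```python
def summarize(readings):
    """Time-average, maximum, and minimum of a series of readings."""
    return {
        "average": sum(readings) / len(readings),
        "maximum": max(readings),
        "minimum": min(readings),
    }

def moving_average(readings, window):
    """Smooth the series by averaging each run of `window` consecutive readings."""
    return [sum(readings[i:i + window]) / window
            for i in range(len(readings) - window + 1)]

# Hypothetical temperature readings in degrees F
temperatures = [71.8, 72.4, 73.1, 72.9, 74.6, 73.2]
stats = summarize(temperatures)
smoothed = moving_average(temperatures, 3)
print(stats, smoothed)
```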

Present the data

Data presentation may include displaying the data on a human-machine interface (HMI), logging the data to a storage location such as a hard disk drive or CompactFlash device, or sharing the data over a network.

Stored data are used to evaluate optimized operating conditions, or evaluate predictive maintenance or troubleshooting criteria. For further processing and analysis, data can be directed to software programs such as Microsoft Excel or other data management software, some of which have storage capacity up to a billion data points.
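One common way to hand logged data to a spreadsheet or data management program is a comma-separated text file. The sketch below writes to an in-memory buffer so it runs anywhere; in practice the stream would be a file on the storage device. The column names and readings are hypothetical.

```python
import csv
import io

def log_to_csv(rows, stream):
    """Write a header line followed by (time, temperature) rows."""
    writer = csv.writer(stream)
    writer.writerow(["time_s", "temperature_f"])
    writer.writerows(rows)

readings = [(0.0, 71.8), (1.0, 72.4), (2.0, 73.1)]  # hypothetical data
buffer = io.StringIO()
log_to_csv(readings, buffer)
csv_text = buffer.getvalue()
print(csv_text)
```

To write to disk instead, the buffer could be replaced with `open("log.csv", "w", newline="")`, where the file name is whatever suits the installation.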

Data acquisition platforms

After you have defined your data acquisition architecture, including acquisition requirements, analysis needs, and presentation considerations, the next step is to choose the data acquisition hardware platform that best meets your needs. Two platforms commonly used for industrial data acquisition are PACs and PCI extensions for instrumentation (PXI).

PACs

PACs provide a hybrid mix of the PC and the PLC by combining features such as processor, RAM, and application software of the PC with the reliability, ruggedness, and distributed nature of the PLC. PACs also include high-level PC characteristics such as floating-point processors for custom calculations, embedded web servers for easy control and monitoring, removable CompactFlash for data logging, and Ethernet connectivity for data networking.

PACs are ideal for applications in rugged or distributed environments requiring slow acquisition rates. In general, PAC data acquisition rates are timed by software and limited to several hundred Hz. PACs are rated for up to 50 g of shock and 5 g of vibration for mobile and vibrating environments, feature CE heavy industrial electromagnetic compatibility (EMC) ratings for use in electrically noisy environments, and feature an operating temperature range of -13 F to 140 F.

Typical PACs feature high-accuracy analog I/O with built-in modular signal conditioning and 16-bit resolution. Because high resolution and signal conditioning alone cannot guarantee repeatable accurate measurements, a measurement system must be calibrated against a known measurement standard. Some of the more accurate PAC systems have calibration traceability to the National Institute of Standards and Technology (NIST), formerly known as the National Bureau of Standards. Also, PACs allow direct connection to sensors such as thermocouples, RTDs, current sources, and high-voltage analog outputs without additional hardware.

PACs use industry-standard software that provides a wide array of functions such as scaling, statistical process control (SPC), curve fitting, data lookup tables, and trending. These are combined with basic components to create custom functions including linear algebra, calculus, array operations, filters, and other complex manipulations. This combination allows you to rapidly build your application using these functions, while easily implementing your own custom calculations.
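Scaling is the simplest of these functions: it converts a raw electrical reading into engineering units. The sketch below assumes a linear transmitter with a 4 to 20 mA output spanning 0 to 100 psi. That sensor and its range are assumptions for illustration, not values from the article.

```python
def scale_linear(raw, raw_min, raw_max, eng_min, eng_max):
    """Map a raw reading linearly onto an engineering-unit range."""
    fraction = (raw - raw_min) / (raw_max - raw_min)
    return eng_min + fraction * (eng_max - eng_min)

# Assumed transmitter: 4 mA = 0 psi, 20 mA = 100 psi
pressure_psi = scale_linear(12.0, 4.0, 20.0, 0.0, 100.0)
print(pressure_psi)  # 50.0
```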

Also, PACs offer a variety of tools for data logging and storage. A number of PACs on the market use nonvolatile internal flash memory and removable CompactFlash, allowing you to store more than 1 GB of data in standard DOS-compatible files. Some PACs allow you to retrieve the data using a file transfer protocol (FTP) server, or transmit the data over a phone line. Other PACs offer removable CompactFlash that allows data to be transported to a PC for further analysis. Ethernet and web connectivity are ways to share logged or real-time data from the PAC to distribute information, share reports, and even distribute control remotely.
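A quick calculation shows how long a given amount of storage lasts. The channel count, logging rate, and 8 bytes per stored sample below are assumptions; text files with timestamps typically use more space per sample than this.

```python
def logging_duration_hours(capacity_bytes, channels, rate_hz, bytes_per_sample):
    """Hours of continuous logging that fit in the given storage capacity."""
    bytes_per_second = channels * rate_hz * bytes_per_sample
    return capacity_bytes / bytes_per_second / 3600.0

# Assumed setup: 1 GB of storage, 16 channels at 100 Hz, 8 bytes per sample
hours = logging_duration_hours(1_000_000_000, 16, 100, 8)
print(f"{hours:.1f} hours")  # 21.7 hours
```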

PXI

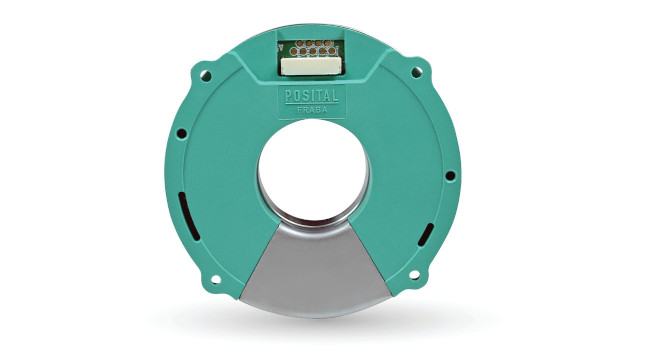

PXI was developed in 1997 to build on the modular and scalable CompactPCI platform, an industrial version of the PCI bus. Today, PXI is governed by the PXI Systems Alliance (pxisa.org), which is an organization of more than 60 companies offering more than 880 products for a variety of measurement applications. PXI combines the ruggedness of CompactPCI with specialized measurement features, including trigger lines and a 10-MHz reference clock for multidevice timing and synchronization.

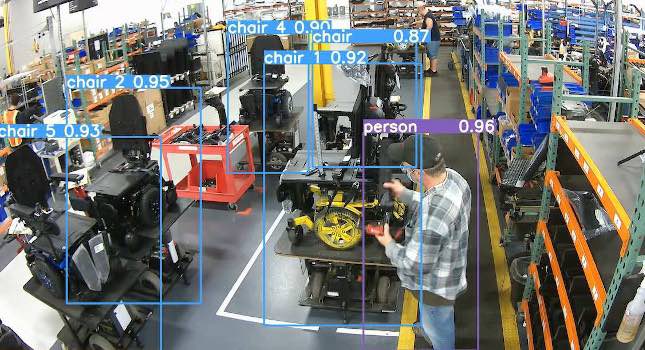

There are many products available for PXI test and measurement applications. PXI offers data acquisition products with speed and accuracy specifications ranging from 60 samples per sec (S/s) at 24 bits of precision to 1 GS/s at 8 bits of precision. General-purpose PXI data acquisition devices can accommodate up to 64 channels of analog input, 2 channels of analog output, and 32 lines of digital I/O (Fig. 3). For example, PXI offers an 8-channel, 24-bit, 110-dB dynamic range data acquisition device for precise characterization of rotating machinery.
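The relationship between bit count and dynamic range can be estimated with the standard formula for an ideal converter measuring a full-scale sine wave, roughly 6.02 dB per bit plus 1.76 dB. The sketch below applies that textbook approximation to the figures quoted above; it is not a specification for any particular device.

```python
def ideal_dynamic_range_db(bits):
    """Ideal dynamic range of an N-bit converter for a full-scale sine wave."""
    return 6.02 * bits + 1.76

def effective_bits(dynamic_range_db):
    """Number of ideal bits that would give the stated dynamic range."""
    return (dynamic_range_db - 1.76) / 6.02

ideal_24 = ideal_dynamic_range_db(24)   # about 146.2 dB
enob_110 = effective_bits(110.0)        # about 18.0 bits
print(f"{ideal_24:.1f} dB, {enob_110:.1f} bits")
```

By this estimate, a 110-dB dynamic range corresponds to about 18 effective bits, which illustrates why resolution in bits and achievable measurement quality are not the same thing.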

The PXI architecture was designed for industrial test and measurement applications in rugged environments. With an operating temperature range of 32 F to 122 F, a functional shock rating of 30 g, and the capacity to withstand up to 0.3 g of vibration, PXI is much better suited for harsh operating conditions than a standard desktop PC. For transducer signals requiring special conditioning such as thermocouple measurements, strain measurements, and high voltages, specialized signal-conditioning hardware that interfaces directly with the PXI data acquisition hardware can be used.

PXI uses standard software architectures, such as Microsoft operating systems, which allows users to develop and execute software applications used on common desktop PCs. Post-acquisition analysis can be performed with a user-defined application program, such as a graphical programming language with specialized analysis features.

Like PACs, PXI has the capability of either logging data to a local storage device, or sharing data over a local area network (LAN) via Ethernet. PXI offers small computer system interface (SCSI) controller modules for data streaming to additional hard disk drives, and gigabit Ethernet modules for high-speed data transfer to network locations.
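The platform ratings quoted in this article can be collected into a simple screening helper. This is a rough sketch only: the 500-Hz PAC rate is an assumed stand-in for "several hundred Hz," the class and function names are hypothetical, and actual products should be checked against their own data sheets.

```python
from dataclasses import dataclass

@dataclass
class Platform:
    name: str
    max_rate_hz: float
    temp_min_f: float
    temp_max_f: float
    shock_g: float
    vibration_g: float

# Ratings as quoted in the article; the PAC rate of 500 Hz is an assumption.
PAC = Platform("PAC", 500.0, -13.0, 140.0, 50.0, 5.0)
PXI = Platform("PXI", 1e9, 32.0, 122.0, 30.0, 0.3)

def suitable_platforms(required_rate_hz, ambient_temp_f,
                       vibration_g=0.0, shock_g=0.0):
    """Names of the platforms whose quoted ratings cover the requirements."""
    return [p.name for p in (PAC, PXI)
            if required_rate_hz <= p.max_rate_hz
            and p.temp_min_f <= ambient_temp_f <= p.temp_max_f
            and vibration_g <= p.vibration_g
            and shock_g <= p.shock_g]

print(suitable_platforms(200, 0, vibration_g=2.0))      # ['PAC']
print(suitable_platforms(50_000, 75, vibration_g=0.1))  # ['PXI']
print(suitable_platforms(100, 75, vibration_g=0.1))     # ['PAC', 'PXI']
```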

More Info:

If you have questions on choosing data acquisition platforms, contact the author. Garth Black can be reached at 512-683-0100. Article edited by Jack Smith, Senior Editor, 630-288-8783.